The EU AI Act represents a fundamental shift in how organizations must govern artificial intelligence systems, moving from a purely innovation-driven approach to one where regulatory compliance and risk management are paramount. Internal audit functions now face a critical mandate to ensure their organizations navigate this complex regulatory landscape effectively, regardless of whether they operate within the European Union or serve EU markets from abroad.

This article examines the EU AI Act’s compliance requirements, explains how internal audit must evolve to address these obligations, and provides practical guidance for organizations seeking to align their AI governance frameworks with regulatory expectations.

Understanding the EU AI Act’s Regulatory Framework

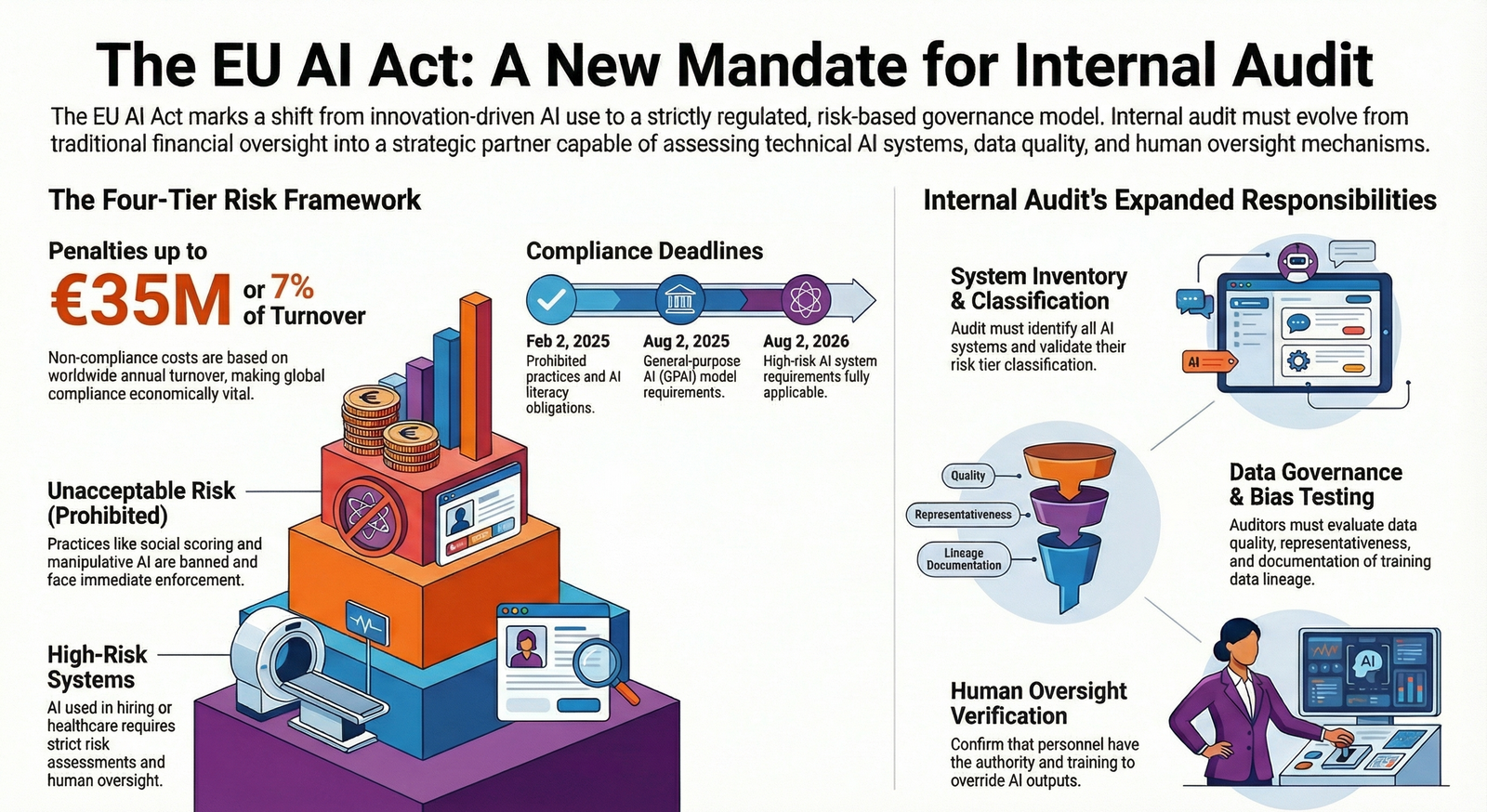

The EU AI Act entered into force on August 1, 2024, and represents the world’s first comprehensive regulatory framework for artificial intelligence. The regulation establishes a risk-based approach that classifies AI systems into distinct categories, each with specific compliance obligations. The framework applies not only to organizations based within the European Union but extends extraterritorially to any company offering AI-based products or services in the EU market, regardless of where it is located.

The Act’s implementation follows a phased timeline.

- Prohibited AI practices and AI literacy obligations became applicable on February 2, 2025.

- General-purpose AI (GPAI) model requirements took effect on August 2, 2025.

- High-risk AI system requirements will be fully applicable by August 2, 2026, with some embedded systems receiving an extended transition period until August 2, 2027.
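The phased timeline above can be expressed as a small lookup that an audit team might use to check which obligations already apply on a given date. This is a minimal sketch: the dates are taken from the list above, while the names `MILESTONES`, `applicable_on`, and `next_milestone` are illustrative assumptions rather than part of any official tooling.

```python
from __future__ import annotations

from datetime import date

# Applicability dates as stated in the timeline above.
MILESTONES = [
    (date(2025, 2, 2), "Prohibited AI practices and AI literacy obligations"),
    (date(2025, 8, 2), "General-purpose AI (GPAI) model requirements"),
    (date(2026, 8, 2), "High-risk AI system requirements"),
    (date(2027, 8, 2), "High-risk requirements for embedded systems (extended transition)"),
]


def applicable_on(as_of: date) -> list[str]:
    """Return the obligations whose applicability date has been reached."""
    return [label for start, label in MILESTONES if start <= as_of]


def next_milestone(as_of: date) -> tuple[date, str] | None:
    """Return the next upcoming milestone, or None if all have passed."""
    upcoming = [m for m in MILESTONES if m[0] > as_of]
    return min(upcoming) if upcoming else None


if __name__ == "__main__":
    today = date(2025, 3, 1)
    print(applicable_on(today))
    print(next_milestone(today))
```

A lookup like this is only as current as the dates it encodes, so it would need updating if implementation guidance changes the schedule.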

Non-compliance carries substantial penalties. Depending on the violation type, maximum fines range from EUR 7.5 million or 1 percent of worldwide annual turnover up to EUR 35 million or 7 percent of worldwide annual turnover.
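To make the penalty arithmetic concrete, the sketch below computes the ceiling of a fine for the most serious tier (EUR 35 million or 7 percent of worldwide annual turnover). It assumes the "whichever is higher" rule that the Act applies to undertakings; SMEs and start-ups are treated differently and are not modeled here. The function name `fine_ceiling` and the turnover figures are hypothetical.

```python
def fine_ceiling(fixed_cap_eur: float, turnover_pct: float, worldwide_turnover_eur: float) -> float:
    """Upper bound of a fine: the higher of the fixed cap and the turnover-based cap.

    Assumption: the 'whichever is higher' rule for undertakings.
    """
    turnover_cap = worldwide_turnover_eur * turnover_pct / 100
    return max(fixed_cap_eur, turnover_cap)


if __name__ == "__main__":
    # Hypothetical company with EUR 2 billion worldwide turnover:
    # 7 percent of turnover (EUR 140 million) exceeds the EUR 35 million cap.
    print(fine_ceiling(35_000_000, 7, 2_000_000_000))  # 140000000.0

    # Hypothetical company with EUR 100 million worldwide turnover:
    # 7 percent is EUR 7 million, so the EUR 35 million fixed cap is the ceiling.
    print(fine_ceiling(35_000_000, 7, 100_000_000))  # 35000000.0
```

The result is a maximum, not a prediction: the actual fine is set by the authority within that ceiling.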

The Four-Tier Risk Classification System

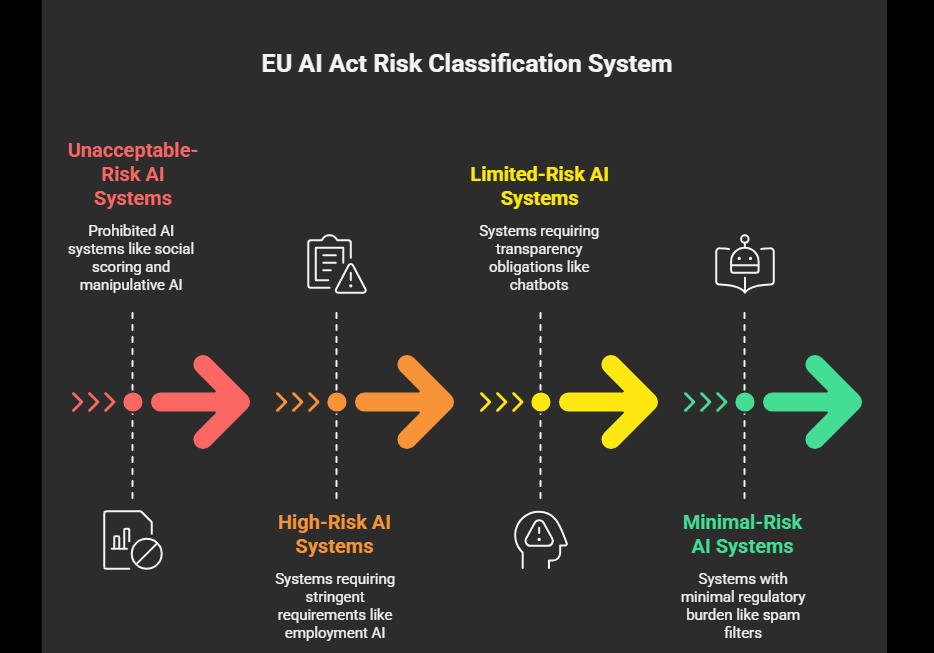

The EU AI Act establishes a risk-based regulatory approach with four distinct tiers that determine compliance obligations. Understanding these categories is fundamental for internal audit functions tasked with assessing organizational AI systems.

Unacceptable-risk AI systems are prohibited outright. These include government social scoring systems, manipulative AI that distorts behavior and impairs informed decision-making, systems exploiting vulnerabilities related to age or disability, untargeted facial recognition database creation, emotion recognition in workplaces or educational institutions, biometric categorization systems inferring protected characteristics, and real-time remote biometric identification in publicly accessible spaces for law enforcement without strict conditions. Organizations deploying any of these systems are exposed to enforcement action and the highest penalty tier.

High-risk AI systems, for example AI technology used in employment and hiring procedures, are subject to stringent requirements including thorough risk assessments, high-quality datasets, traceability measures, detailed documentation, human oversight, and robustness standards. These systems require comprehensive governance before they are placed on the market and continuous monitoring afterwards.

Limited-risk AI systems, such as chatbots and systems that generate deepfakes, must meet specific transparency obligations. Developers and deployers must ensure end-users are aware they are interacting with AI, prevent illegal content generation, and publish summaries of copyrighted data used for training.

Minimal-risk AI systems, such as spam filters, face minimal regulatory burden and are permitted for free use.
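The four tiers can be captured in a simple data structure that maps each tier to the obligations summarized above. This is an illustrative sketch only: the enum, the obligation labels, and the helper names are assumptions made for this example, and the labels paraphrase this article rather than the legal text.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Obligation labels paraphrase the tier descriptions above (not legal wording).
OBLIGATIONS = {
    RiskTier.UNACCEPTABLE: ["prohibited: must not be deployed"],
    RiskTier.HIGH: [
        "risk assessment",
        "high-quality datasets",
        "traceability",
        "detailed documentation",
        "human oversight",
        "robustness",
    ],
    RiskTier.LIMITED: ["transparency: tell end-users they are interacting with AI"],
    RiskTier.MINIMAL: [],
}


def obligations_for(tier: RiskTier) -> list:
    """Return the obligation labels recorded for a tier."""
    return OBLIGATIONS[tier]


def may_deploy(tier: RiskTier) -> bool:
    """Only unacceptable-risk systems are barred outright."""
    return tier is not RiskTier.UNACCEPTABLE


if __name__ == "__main__":
    for tier in RiskTier:
        print(tier.value, may_deploy(tier), obligations_for(tier))
```

Keeping the mapping in one place gives auditors a single reference point when they compare a system's declared tier with the controls that are actually in place.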

Why This Moment Demands Internal Audit Evolution

Organizations face unprecedented pressure to demonstrate AI governance maturity as regulators worldwide increasingly adopt AI regulation frameworks modeled on the EU AI Act. The regulatory landscape is shifting from a penalty-based approach to preemptive regulation, requiring organizations to proactively identify, assess, and manage AI risks before deployment.

Internal audit functions have historically focused on financial controls and operational compliance. However, the EU AI Act requires organizations to establish enterprise-wide AI governance programs involving boards of directors, C-suites, compliance professionals, and team managers.

Internal audit must evolve from a reactive oversight function to a strategic partner in AI governance, capable of assessing technical AI systems, evaluating data quality and bias, and ensuring human oversight mechanisms are functioning effectively. This transformation is essential because regulators expect organizations to demonstrate continuous compliance monitoring and incident reporting capabilities.

Additionally, the extraterritorial reach of the EU AI Act means that even organizations headquartered outside Europe must comply if they place AI systems on the EU market or if the output of their AI systems is used in the EU. This global applicability creates compliance obligations that internal audit functions cannot ignore, regardless of their organization’s primary market focus.

Impact on Organizational Governance and Risk Management

The EU AI Act creates multiple operational, legal, and governance consequences that internal audit functions must address. Organizations must now maintain regularly updated inventories of all AI systems in development or deployment, assess which systems fall within the Act’s scope, and identify their risk classification and relevant compliance obligations.

Key compliance obligations include:

- Ensuring sufficient AI literacy among staff and personnel involved in AI system operation and deployment, considering their technical knowledge, experience, education, and training.

- Conducting and documenting risk assessments for high-risk AI systems before deployment.

- Implementing data governance frameworks ensuring clean, reliable, and representative training data, particularly for sensitive domains like finance, healthcare, and retail.

- Establishing human oversight mechanisms and traceability measures for high-risk systems.

- Creating documentation procedures demonstrating compliance with transparency and technical requirements.

- Registering high-risk AI systems in public databases as required by national competent authorities.

- Tracking, documenting, and reporting serious incidents to the AI Office and relevant authorities without undue delay.

Internal audit functions must develop assessment capabilities to evaluate whether these obligations are being met consistently across the organization. This includes assessing whether data governance practices support compliance, whether risk assessments are conducted with appropriate rigor, and whether incident reporting mechanisms function effectively.
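One way to make this assessment repeatable is to record, for each inventoried system, which pieces of compliance evidence exist and then list what is missing for its risk tier. The sketch below assumes a hypothetical inventory record and hypothetical evidence labels derived loosely from the obligations listed above; a real program would define its own evidence catalogue.

```python
from dataclasses import dataclass, field

# Hypothetical evidence labels, loosely following the obligations listed above.
REQUIRED_EVIDENCE = {
    "high": {
        "risk_assessment",
        "data_governance",
        "human_oversight",
        "technical_documentation",
        "registration",
        "incident_reporting",
    },
    "limited": {"transparency_notice"},
    "minimal": set(),
}


@dataclass
class AISystemRecord:
    name: str
    owner: str
    risk_tier: str  # "high", "limited", or "minimal" in this sketch
    evidence: set = field(default_factory=set)


def evidence_gaps(record: AISystemRecord) -> list:
    """Return the sorted evidence labels still missing for the record's tier."""
    required = REQUIRED_EVIDENCE.get(record.risk_tier)
    if required is None:
        raise ValueError(f"unknown or non-deployable tier: {record.risk_tier}")
    return sorted(required - record.evidence)


def inventory_gaps(inventory: list) -> dict:
    """Map each system with missing evidence to its list of gaps."""
    result = {}
    for record in inventory:
        gaps = evidence_gaps(record)
        if gaps:
            result[record.name] = gaps
    return result


if __name__ == "__main__":
    inventory = [
        AISystemRecord("cv-screener", "HR", "high", {"risk_assessment", "human_oversight"}),
        AISystemRecord("spam-filter", "IT", "minimal"),
    ]
    print(inventory_gaps(inventory))
```

Systems with no gaps are left out of the result, so the output doubles as a worklist for follow-up.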

Enforcement Direction and Regulatory Signals

- The European Commission has published the General-Purpose AI Code of Practice to help providers demonstrate compliance, indicating that regulators expect organizations to adopt industry best practices and maintain transparent compliance documentation.

- National competent authorities are establishing AI regulatory sandboxes by August 2, 2026, providing testing environments for organizations to validate compliance approaches.

- Regulators are signaling that compliance will be assessed through documentation review, incident investigation, and assessment of governance frameworks.

- Organizations demonstrating proactive compliance efforts, transparent risk management, and genuine commitment to responsible AI development will face lower enforcement risk than those appearing to treat compliance as a checkbox exercise.

- Internal audit functions that document their assessment activities and provide evidence of continuous monitoring will strengthen their organization’s compliance posture.

- Leading organizations are investing significantly in AI governance infrastructure, data quality improvements, and compliance documentation systems. Organizations that delay compliance preparation face competitive disadvantage as regulatory scrutiny intensifies and penalties become more common.

Internal Audit’s Expanded Compliance Responsibilities

Internal audit functions must assume several critical responsibilities to ensure organizational compliance with the EU AI Act. These responsibilities extend beyond traditional audit activities and require developing new technical competencies and assessment methodologies.

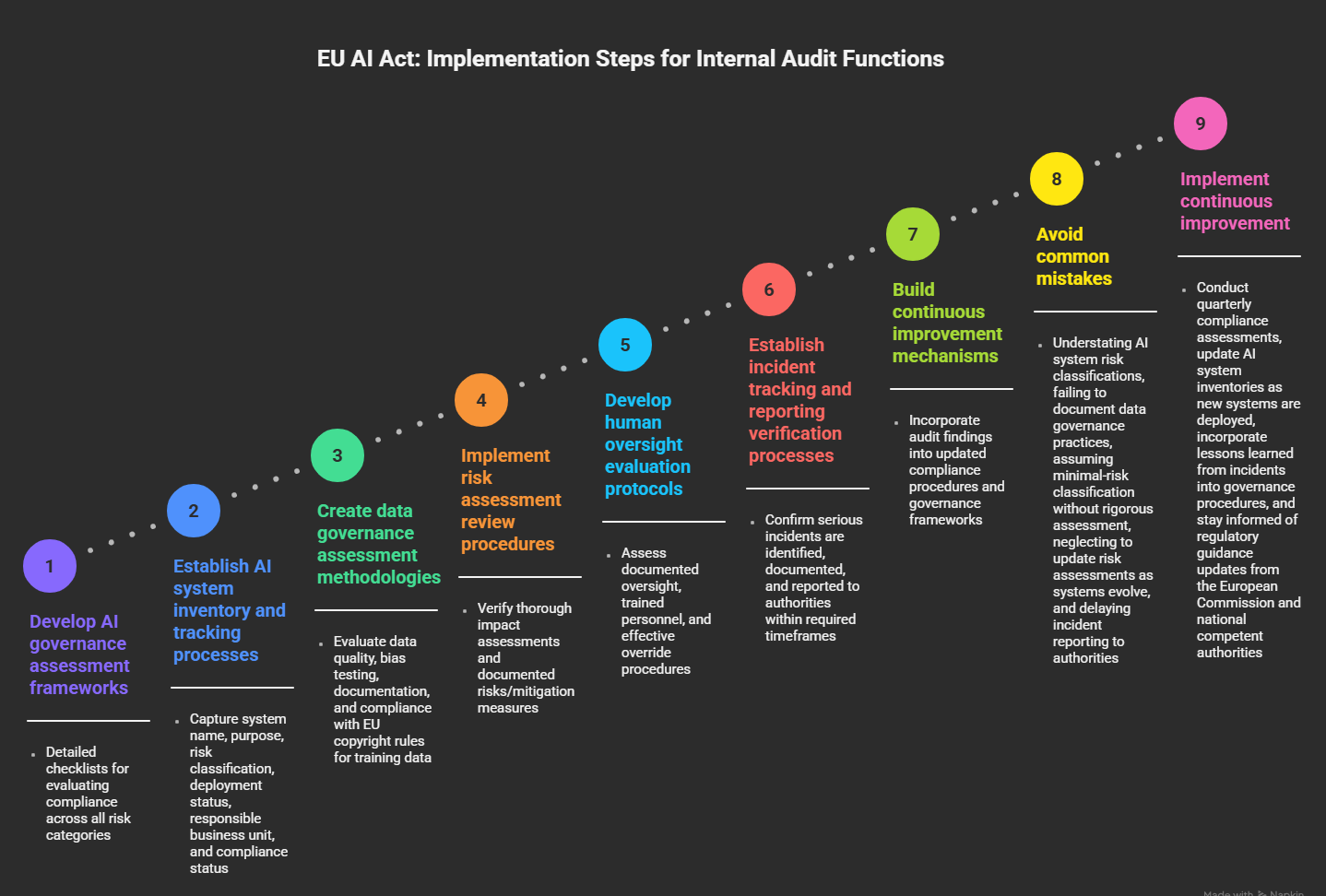

- Internal audit must conduct comprehensive AI system inventories and risk classification assessments. This involves identifying all AI systems in use, understanding their functionality and data inputs, classifying them according to the EU AI Act’s risk tiers, and documenting compliance obligations for each system. Internal audit should validate that business units have accurately classified their AI systems and have not understated risk levels to avoid compliance burdens.

- Internal audit must assess whether organizations have implemented adequate data governance frameworks. This includes evaluating data quality, representativeness, bias testing, and documentation of training data sources. For high-risk systems, internal audit should verify that organizations maintain detailed records of data lineage and can demonstrate that training data meets quality standards.

- Internal audit must evaluate risk assessment and mitigation processes for high-risk AI systems. This involves reviewing whether organizations have conducted thorough impact assessments, documented identified risks, and implemented appropriate mitigation measures. Internal audit should assess whether risk assessments are updated regularly and whether new risks identified during system operation are documented and addressed.

- Internal audit must verify that human oversight mechanisms are functioning effectively. For high-risk systems, the EU AI Act requires meaningful human oversight so that AI system outputs are reviewed and validated before they are used in consequential decisions. Internal audit should assess whether oversight procedures are documented, whether personnel conducting oversight have appropriate training and authority, and whether override mechanisms function effectively.

- Internal audit must monitor incident reporting and response processes. Organizations must track and report serious incidents to the AI Office and national competent authorities without undue delay. Internal audit should verify that incident identification mechanisms are in place, that serious incidents are escalated appropriately, and that incident reports are submitted within required timeframes.
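Two of the checks described above lend themselves to simple automation: spotting systems whose business-unit classification is lower than the tier internal audit arrived at, and flagging incident reports that were filed late or are still outstanding. The following is a minimal sketch under stated assumptions: the record types, tier ordering, and function names are hypothetical, and `deadline_days` is a placeholder parameter rather than a statement of the legal reporting deadline.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Assumed ordering of tiers from least to most severe.
SEVERITY = {"minimal": 0, "limited": 1, "high": 2, "unacceptable": 3}


@dataclass
class ClassificationReview:
    system: str
    business_unit_tier: str  # tier declared by the system owner
    audit_tier: str          # tier determined by internal audit


def understated(reviews: list[ClassificationReview]) -> list[str]:
    """Systems whose declared tier is less severe than the audited tier."""
    return [
        r.system
        for r in reviews
        if SEVERITY[r.business_unit_tier] < SEVERITY[r.audit_tier]
    ]


@dataclass
class IncidentReport:
    incident_id: str
    became_aware: date
    submitted: Optional[date]  # None if not yet reported


def late_reports(reports: list[IncidentReport], deadline_days: int, today: date) -> list[str]:
    """Incidents reported after the deadline, or unreported and already overdue."""
    late = []
    for r in reports:
        due = r.became_aware + timedelta(days=deadline_days)
        effective = r.submitted if r.submitted is not None else today
        if effective > due:
            late.append(r.incident_id)
    return late


if __name__ == "__main__":
    reviews = [
        ClassificationReview("cv-screener", "limited", "high"),
        ClassificationReview("spam-filter", "minimal", "minimal"),
    ]
    print(understated(reviews))

    reports = [
        IncidentReport("INC-1", date(2026, 1, 1), date(2026, 1, 10)),
        IncidentReport("INC-2", date(2026, 1, 1), None),
    ]
    # deadline_days=15 is a placeholder value chosen for illustration.
    print(late_reports(reports, deadline_days=15, today=date(2026, 1, 20)))
```

Checks like these do not replace auditor judgment. They surface the cases that merit a closer look.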

Practical Implementation Steps for Internal Audit Functions

Organizations should take the following practical steps so that internal audit functions can effectively support EU AI Act compliance.

Internal audit should establish working relationships with business units responsible for AI system development and deployment. Regular communication ensures internal audit understands system functionality, data inputs, and deployment contexts necessary for accurate risk assessment. Internal audit should also coordinate with compliance, legal, and information security functions to ensure comprehensive governance coverage.

Organizations should invest in training internal audit personnel on AI system functionality, data science concepts, and bias assessment methodologies. While internal audit need not develop deep technical expertise in machine learning, understanding AI system basics is essential for conducting meaningful assessments. Many organizations are engaging external AI governance specialists to supplement internal audit capabilities during initial compliance implementation.

The EU AI Act’s emphasis on documentation and transparency creates opportunities for internal audit to strengthen organizational governance. By establishing clear compliance documentation standards and verifying that organizations maintain comprehensive records of risk assessments, data governance practices, and incident responses, internal audit can demonstrate the organization’s commitment to responsible AI development and reduce enforcement risk.

The regulatory landscape will continue evolving as national competent authorities issue implementation guidance and enforcement priorities become clearer. Internal audit functions should establish processes for monitoring regulatory developments, updating assessment methodologies as guidance is published, and communicating compliance implications to organizational leadership.

The EU AI Act represents a watershed moment in AI governance, establishing standards that are likely to influence regulations globally as other countries develop their own AI frameworks. Organizations that invest in robust internal audit capabilities now will be better positioned to navigate emerging regulations in other jurisdictions and maintain competitive advantage as AI governance becomes a core organizational competency. Internal audit’s evolution from traditional compliance oversight to strategic AI governance partner is not optional but essential for organizational success in a regulated AI environment.

FAQ

1. Does the EU AI Act apply to organizations outside the European Union?

Ans: Yes, the EU AI Act has extraterritorial reach and applies to any organization offering AI-based products or services in the EU market, regardless of where the organization is headquartered. This means US companies, Asian companies, and organizations from any country must comply if they place AI systems on the EU market or if the output of their AI systems is used in the EU. Organizations cannot avoid compliance by locating operations outside Europe if they maintain any commercial presence in EU markets.

2. What are the maximum penalties for non-compliance with the EU AI Act?

Ans: Maximum penalties range from EUR 7.5 million or 1 percent of worldwide annual turnover for certain violations to EUR 35 million or 7 percent of worldwide annual turnover for the most serious violations, such as deploying prohibited AI systems. These penalties are calculated on global revenue, not just EU revenue, making compliance economically significant even for organizations with limited EU market exposure. Beyond financial penalties, organizations face reputational damage, loss of customer trust, and potential exclusion from EU markets.

3. What specific AI practices are completely banned under the EU AI Act?

Ans: The EU AI Act prohibits eight specific AI practices deemed unacceptable risk: harmful AI-based manipulation and deception, harmful exploitation of vulnerabilities, government social scoring, individual criminal offense risk assessment or prediction, untargeted scraping of the internet or CCTV material to create facial recognition databases, emotion recognition in workplaces and educational institutions, biometric categorization to deduce protected characteristics, and real-time remote biometric identification for law enforcement in publicly accessible spaces without strict conditions. Organizations must immediately cease any deployment of these systems and cannot continue operating them even with modifications.

4. What is the timeline for full EU AI Act compliance?

Ans: The EU AI Act follows a phased implementation timeline. Prohibited AI practices and AI literacy obligations became applicable on February 2, 2025. General-purpose AI model requirements became applicable on August 2, 2025. High-risk AI system requirements will be fully applicable by August 2, 2026, with some embedded systems receiving an extended transition period until August 2, 2027. Organizations should prioritize compliance with currently applicable requirements while preparing for upcoming deadlines.

5. What role should internal audit play in EU AI Act compliance?

Ans: Internal audit functions must evolve from traditional compliance oversight to strategic AI governance partners. Key responsibilities include conducting AI system inventories and risk classifications, assessing data governance frameworks, evaluating risk assessment processes, verifying human oversight mechanisms, and monitoring incident reporting and response. Internal audit should develop assessment capabilities to evaluate whether organizations are meeting compliance obligations consistently across all AI systems and should establish continuous improvement processes that incorporate audit findings into updated governance frameworks.

6. How should organizations classify their AI systems under the EU AI Act?

Ans: Organizations must classify AI systems into four risk categories: unacceptable-risk (prohibited), high-risk (subject to strict requirements), limited-risk (subject to transparency obligations), and minimal-risk (largely unregulated). Classification depends on the AI system’s intended purpose, the potential harm it could cause, and the vulnerability of affected populations. Organizations should conduct thorough impact assessments to support their risk classifications and should document the rationale for each classification decision. Internal audit should verify that classifications are accurate and that organizations have not understated risk levels to avoid compliance burdens.

7. What data governance practices does the EU AI Act require?

Ans: The EU AI Act requires organizations to maintain clean, reliable, and representative training data, particularly for high-risk AI systems used in sensitive domains like finance, healthcare, and retail. Organizations must document data sources, conduct bias testing, ensure data quality, and maintain records demonstrating compliance with EU copyright rules. For limited-risk systems like chatbots, organizations must publish summaries of copyrighted data used for training. Internal audit should assess whether data governance frameworks support these requirements and whether organizations maintain adequate documentation of data practices.

8. What is the difference between high-risk and limited-risk AI systems?

Ans: High-risk AI systems, such as those used in employment and hiring procedures, are subject to stringent requirements including thorough risk assessments, high-quality datasets, traceability measures, detailed documentation, human oversight, and robustness standards. Limited-risk AI systems, such as chatbots, must meet specific transparency obligations requiring that end-users be aware they are interacting with AI and that AI-generated content be clearly labeled. High-risk systems require more extensive governance infrastructure and ongoing monitoring, while limited-risk systems primarily require transparency disclosures.

9. How should organizations respond to serious AI system incidents under the EU AI Act?

Ans: Organizations must track, document, and report serious incidents to the AI Office and relevant national competent authorities without undue delay. A serious incident is one that causes or could cause significant harm to individuals’ safety, rights, or fundamental freedoms. Organizations should establish incident identification and escalation procedures, maintain incident documentation, and ensure that incident reports are submitted within required timeframes. Internal audit should verify that incident reporting mechanisms function effectively and that organizations are not delaying incident disclosure.

10. What is the General-Purpose AI Code of Practice and how does it relate to compliance?

Ans: The European Commission published the General-Purpose AI Code of Practice in July 2025 to help providers of GPAI models demonstrate compliance with the EU AI Act. The Code provides guidance on implementing transparency requirements, conducting model evaluations, assessing and mitigating systemic risks, and ensuring cybersecurity protection. While the Code is not legally binding, organizations that follow its guidance demonstrate good faith compliance efforts and reduce enforcement risk. Organizations should review the Code and assess whether their GPAI practices align with its recommendations.
