AI governance establishes systematic frameworks for responsible AI development, deployment, and monitoring across enterprises. This approach addresses ethical concerns, security vulnerabilities, and compliance needs as AI adoption accelerates globally. Businesses implementing strong AI governance reduce risks while unlocking innovation potential.

This article examines the regulatory landscape, business impacts, compliance strategies, and practical steps for AI governance. You will learn how to integrate governance into operations for sustainable growth and competitive advantage in 2026. Key frameworks and best practices provide actionable guidance for immediate application.

Regulatory Landscape

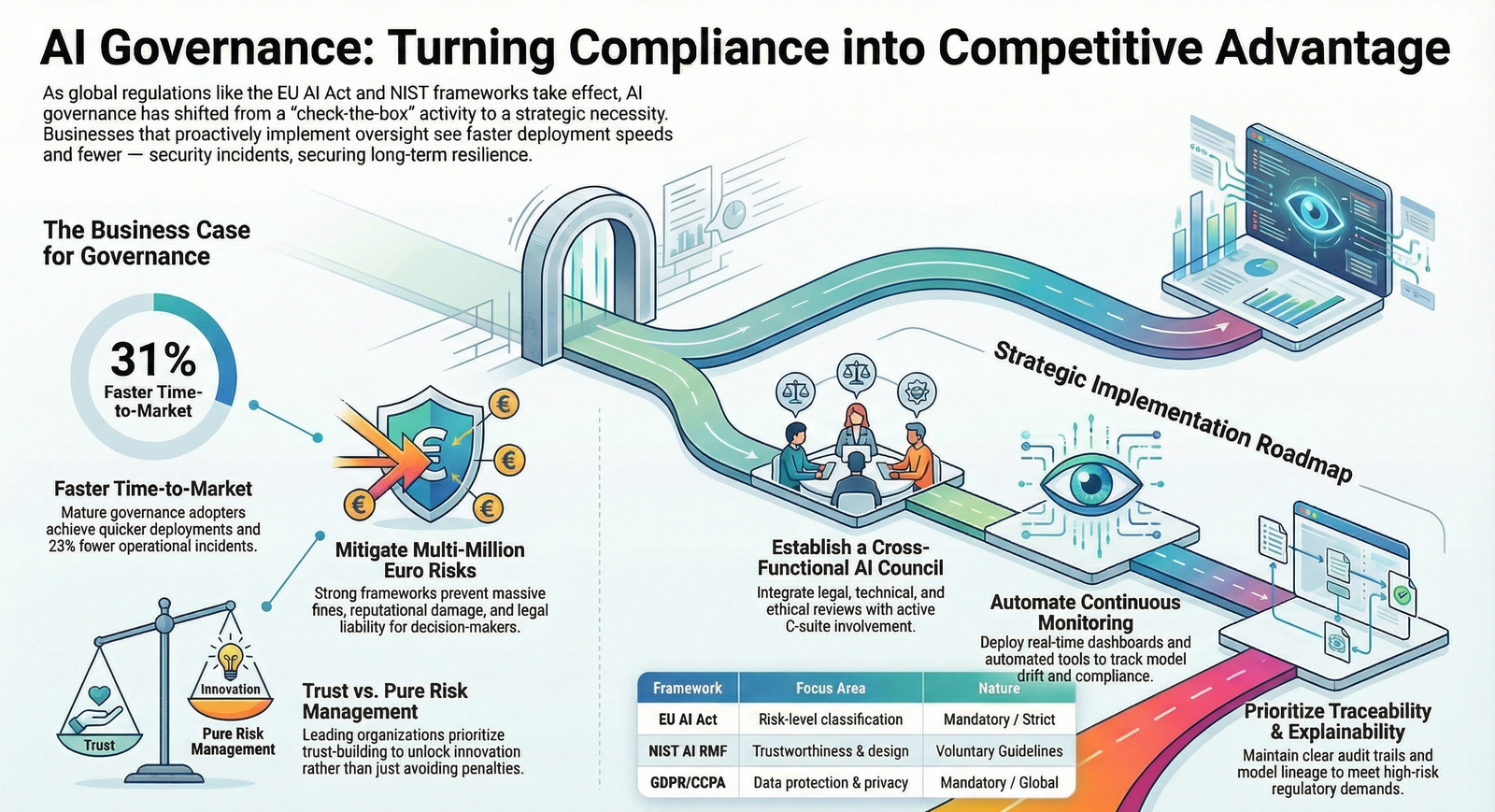

Key frameworks and laws: The European Union AI Act classifies AI systems by risk level and imposes strict requirements, including transparency and human oversight, on high-risk applications. In the US, the NIST AI Risk Management Framework (AI RMF) offers voluntary guidelines for managing AI risks to individuals, organizations, and society, and promotes trustworthiness in AI design and evaluation. Beyond AI-specific rules, data protection laws such as the EU's GDPR and California's CCPA govern the personal data AI systems rely on, while emerging laws target AI-specific bias and discrimination.

Regulators such as the European Commission and US federal agencies oversee enforcement, and penalties can be substantial: the most serious EU AI Act violations carry fines of up to €35 million or 7% of worldwide annual turnover, whichever is higher. These frameworks emphasize fairness, accountability, and continuous monitoring to align AI with societal values.
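To make the risk-based approach concrete, here is a minimal sketch of how a team might tag its AI use cases with the four tiers commonly used to summarize the EU AI Act (unacceptable, high, limited, minimal) and look up an internal control checklist for each tier. The use-case labels, tier assignments, and control names are hypothetical simplifications chosen for illustration; an actual classification requires legal review of the Act itself.

```python
from enum import Enum


class RiskTier(Enum):
    """Four risk categories commonly used to summarize the EU AI Act."""
    UNACCEPTABLE = "unacceptable"  # prohibited practices
    HIGH = "high"                  # strict obligations, e.g. human oversight
    LIMITED = "limited"            # transparency obligations
    MINIMAL = "minimal"            # no additional obligations


# Hypothetical, simplified mapping from internal use-case labels to tiers.
# A real mapping needs legal review of the Act and its annexes.
USE_CASE_TIERS = {
    "social_scoring": RiskTier.UNACCEPTABLE,
    "cv_screening": RiskTier.HIGH,
    "credit_scoring": RiskTier.HIGH,
    "customer_chatbot": RiskTier.LIMITED,
    "spam_filter": RiskTier.MINIMAL,
}

# Illustrative internal control checklist per tier (assumption, not statutory text).
REQUIRED_CONTROLS = {
    RiskTier.UNACCEPTABLE: ["do_not_deploy"],
    RiskTier.HIGH: ["risk_management", "human_oversight",
                    "technical_documentation", "logging"],
    RiskTier.LIMITED: ["disclose_ai_interaction"],
    RiskTier.MINIMAL: [],
}


def classify(use_case: str) -> RiskTier:
    """Return the tier for a known use case; unknown cases default to HIGH
    so they are reviewed rather than silently waved through."""
    return USE_CASE_TIERS.get(use_case, RiskTier.HIGH)


def required_controls(use_case: str) -> list:
    """Look up the internal checklist for the use case's tier."""
    return REQUIRED_CONTROLS[classify(use_case)]


if __name__ == "__main__":
    for uc in ["cv_screening", "customer_chatbot", "new_unreviewed_tool"]:
        print(uc, classify(uc).value, required_controls(uc))
```

Defaulting unknown use cases to the high tier is a deliberate choice in this sketch: a new tool gets reviewed before anyone assumes it is low risk.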

Policy shifts and drivers: Rapid AI advancement has outpaced regulation, prompting governments to introduce frameworks like the EU AI Act to mitigate harms from biased or opaque systems. Economic pressure to innovate competes with national security concerns, and America's AI Action Plan reflects this tension by favoring light-touch governance over bureaucratic rules.

Historical developments, from President Biden's Executive Order 14110 (2023) to its revocation in January 2025 and the deregulatory push that followed, reflect a volatile landscape that shifts more responsibility to the private sector for self-governance. This moment matters because major EU AI Act obligations are scheduled to take effect in 2026, demanding proactive enterprise action amid fragmented US state-level rules.

Operational and legal consequences: Businesses face fines, reputational damage, and deployment halts for non-compliant AI, with governance lapses amplifying biases or security breaches.

- Financial penalties under the EU AI Act for violations involving high-risk systems.

- Legal liability for individuals in decision-making roles when clear accountability structures are missing.

- Governance gaps disrupt scalability, increasing audit costs and rework.

Clear governance works at both levels: organizational decisions receive cross-functional oversight, while individuals gain defined responsibilities, which fosters shared accountability and reduces personal exposure.

Enforcement trends prioritize high-risk AI in sectors like healthcare and finance, where regulators demand traceability and explainability. Industries are responding by forming AI ethics committees and adopting unified governance, risk, and compliance (GRC) platforms for real-time monitoring. One market analysis reports that mature governance adopters achieve 31% faster time-to-market and 23% fewer incidents. Commentary from Deloitte emphasizes building trust, rather than managing risk alone, for more balanced outcomes.
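Traceability ultimately depends on records that cannot be quietly altered. A minimal sketch, assuming an in-memory Python log rather than any particular GRC platform, is a hash-chained audit trail: each entry stores the SHA-256 hash of the previous one, so changing any past record breaks verification. The class name `AuditTrail`, its fields, and the model and team names in the usage lines are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


class AuditTrail:
    """Append-only, hash-chained log of governance events (hypothetical design).

    Each entry stores the hash of the entry before it, so editing or deleting
    a past entry makes verify() return False.
    """

    def __init__(self) -> None:
        self.entries: list = []

    @staticmethod
    def _hash(body: dict) -> str:
        # Canonical JSON (sorted keys) so identical content always hashes identically.
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def record(self, model_id: str, event: str, actor: str,
               details: Optional[dict] = None) -> dict:
        """Append an event linked to the previous entry's hash."""
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "model_id": model_id,
            "event": event,
            "actor": actor,
            "details": details or {},
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS_HASH,
        }
        entry = {**body, "hash": self._hash(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check each link back to the previous entry."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or self._hash(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


if __name__ == "__main__":
    trail = AuditTrail()
    trail.record("credit-model-v3", "approved_for_deployment", "risk-committee")
    trail.record("credit-model-v3", "threshold_changed", "ml-team",
                 {"old": 0.50, "new": 0.55})
    print(trail.verify())  # True: chain is intact
    trail.entries[0]["actor"] = "someone-else"  # simulate tampering
    print(trail.verify())  # False: first entry no longer matches its hash
```

In production, such a log would typically be persisted to write-once storage; the chaining idea stays the same.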

Compliance Expectations & Best Practices

Core compliance steps: Organizations must conduct risk assessments, document model lineage, and establish oversight committees aligned with the NIST AI RMF and the EU AI Act.

- Implement policies for data sourcing, bias detection, and model validation.

- Integrate cross-functional teams for legal, technical, and ethical review.

- Enable continuous monitoring with automated tools for drift and compliance.

- Prioritize transparency through explainability and audit trails.
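Continuous drift monitoring can start with something as simple as the population stability index (PSI), which compares the distribution of a model input or score in production against a baseline. This sketch assumes both distributions are already binned into proportions over the same bins; the 0.1 and 0.25 thresholds are a widely used rule of thumb, not a regulatory requirement, and the function names are hypothetical.

```python
import math
from typing import Sequence


def population_stability_index(expected: Sequence[float],
                               actual: Sequence[float],
                               eps: float = 1e-6) -> float:
    """PSI = sum over bins of (actual - expected) * ln(actual / expected).

    Both inputs are per-bin proportions over the same bins. Empty bins are
    clamped to eps so the logarithm stays defined.
    """
    if len(expected) != len(actual):
        raise ValueError("distributions must use the same bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


def drift_status(psi: float, warn: float = 0.1, alert: float = 0.25) -> str:
    """Map a PSI value to a status using rule-of-thumb thresholds (assumption)."""
    if psi >= alert:
        return "alert"
    if psi >= warn:
        return "warn"
    return "ok"


if __name__ == "__main__":
    baseline = [0.25, 0.25, 0.25, 0.25]  # score distribution at validation time
    current = [0.10, 0.20, 0.30, 0.40]   # same bins, measured in production
    psi = population_stability_index(baseline, current)
    print(f"PSI={psi:.3f} status={drift_status(psi)}")  # PSI=0.228 status=warn
```

A scheduled job running this per feature and per model score, and raising an alert on "alert" status, is one lightweight way to turn "continuous monitoring" into a concrete control.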

Practical Requirements

Organizations need centralized platforms for AI inventory, risk scoring, and vendor assessments to operationalize governance.

- Form AI governance councils with C-suite involvement for strategic alignment.

- Deploy dashboards for real-time performance tracking and anomaly alerts.

- Conduct pre-deployment audits and post-launch feedback loops.

- Avoid common mistakes like siloed teams, unmonitored models, or ignoring third-party risks.
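An AI inventory with risk scoring can begin as a simple record per system. This sketch assumes a hypothetical `AIAsset` record and illustrative scoring weights: it ranks systems by a naive risk score and lists gaps that should block go-live, such as a missing owner, a missing pre-deployment audit, an unassessed vendor, or absent monitoring. Real platforms use richer schemas, and the weights here are placeholders for a governance council to set.

```python
from dataclasses import dataclass


@dataclass
class AIAsset:
    """One entry in a hypothetical AI inventory."""
    name: str
    owner: str                      # accountable team or person
    risk_tier: str                  # e.g. "high", "limited", "minimal"
    third_party: bool               # vendor-supplied model or API
    vendor_assessed: bool = False
    pre_deployment_audit: bool = False
    monitored: bool = False


# Illustrative weights (assumption, not taken from any standard).
TIER_SCORE = {"high": 3, "limited": 2, "minimal": 1}


def risk_score(asset: AIAsset) -> int:
    """Naive additive score: tier weight plus penalties for open gaps."""
    score = TIER_SCORE.get(asset.risk_tier, 3)  # unknown tier treated as high
    if asset.third_party and not asset.vendor_assessed:
        score += 2
    if not asset.monitored:
        score += 1
    return score


def deployment_blockers(asset: AIAsset) -> list:
    """List governance gaps that should block go-live."""
    blockers = []
    if not asset.owner:
        blockers.append("no accountable owner")
    if asset.risk_tier == "high" and not asset.pre_deployment_audit:
        blockers.append("missing pre-deployment audit")
    if asset.third_party and not asset.vendor_assessed:
        blockers.append("vendor not assessed")
    if not asset.monitored:
        blockers.append("no production monitoring")
    return blockers


if __name__ == "__main__":
    inventory = [
        AIAsset("resume-screener", "hr-analytics", "high", third_party=True),
        AIAsset("support-chatbot", "cx-team", "limited", third_party=True,
                vendor_assessed=True, monitored=True),
    ]
    for asset in sorted(inventory, key=risk_score, reverse=True):
        print(asset.name, risk_score(asset), deployment_blockers(asset))
```

Keeping the blocker list separate from the score means a low-scoring system can still be stopped for a single hard gap, such as having no accountable owner.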

For continuous improvement, schedule regular framework reviews against evolving regulations, train staff on ethical AI, and benchmark against industry leaders using metrics like incident reduction and deployment speed. Leverage AI-powered tools for automated compliance testing and predictive risk modeling to stay agile.
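Automated compliance testing can include fairness checks that run alongside ordinary model tests. As one example, this sketch computes selection rates per group and flags any group whose rate falls below 80% of the highest group's rate, the "four-fifths rule" heuristic from US employment-selection guidelines. It is only an illustration of bias detection: neither the EU AI Act nor the NIST AI RMF prescribes this specific test, and the function names, group labels, and data are made up.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def selection_rates(outcomes: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Share of positive decisions per group, from (group, selected) pairs."""
    totals: Dict[str, int] = defaultdict(int)
    selected: Dict[str, int] = defaultdict(int)
    for group, was_selected in outcomes:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {g: selected[g] / totals[g] for g in totals}


def four_fifths_violations(outcomes: Iterable[Tuple[str, bool]],
                           threshold: float = 0.8) -> Dict[str, float]:
    """Return {group: impact_ratio} for groups below threshold x the top rate."""
    rates = selection_rates(outcomes)
    if not rates:
        return {}
    best = max(rates.values())
    if best == 0:
        return {}  # nobody selected: ratio undefined, handle separately
    return {g: r / best for g, r in rates.items() if r / best < threshold}


if __name__ == "__main__":
    # Hypothetical decisions: group A selected 50/100, group B selected 30/100.
    decisions = ([("A", True)] * 50 + [("A", False)] * 50
                 + [("B", True)] * 30 + [("B", False)] * 70)
    flagged = four_fifths_violations(decisions)
    # In a CI pipeline this would be a failing test rather than a print.
    print(flagged)  # B's impact ratio is about 0.6, below 0.8
```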

As AI integrates deeper into operations, governance evolves into a strategic enabler, with 2026 marking heightened enforcement and innovation opportunities. Emerging standards demand proactive adaptation, positioning compliant leaders for sustained ROI amid global scrutiny. Businesses prioritizing AI governance today secure resilience and trust for tomorrow’s growth.

FAQ

1. What exactly constitutes AI governance in a business context?

Ans: AI governance encompasses policies, processes, and structures that oversee AI development, deployment, and monitoring, ensuring ethical use, risk mitigation, and alignment with regulations like the EU AI Act and NIST frameworks.

2. How does AI governance impact ROI for enterprises in 2026?

Ans: Mature governance has been reported to accelerate time-to-market by 31% and reduce incidents by 23%, while lowering audit costs and building trust, turning compliance into a foundation for scalable innovation and market advantage.

3. What are the penalties for failing to implement AI governance?

Ans: Non-compliance risks multimillion-euro fines under the EU AI Act, US regulatory actions, reputational harm, and operational disruptions from biased or insecure AI systems.

4. Which industries face the highest AI governance requirements?

Ans: High-risk sectors like healthcare, finance, and insurance require stringent oversight due to life-altering decisions, demanding traceability, fairness, and continuous monitoring.

5. How can small businesses start building AI governance?

Ans: Begin with risk assessments, adopt the NIST AI RMF, form cross-functional committees, and use automated monitoring tools to scale responsibly without heavy resources.

6. What role does third-party AI play in governance?

Ans: Organizations must assess vendor compliance, enforce contracts for transparency, and monitor supply chain risks to avoid indirect liabilities from external AI tools.
